Will AI-Managed Forensic Sciences Keep Us Safe?

CSI: Crime Scene Investigation launched in 2000, and within a few years commentators were describing the ‘CSI effect’: the unrealistic expectation that forensic science is exact and produces foolproof results. Juries conditioned by decades of watching CSI-style shows demand high-tech forensic evidence. If it’s lacking, for whatever reason, they lean toward the defense. If it’s presented, they believe it absolutely and favor the prosecution.

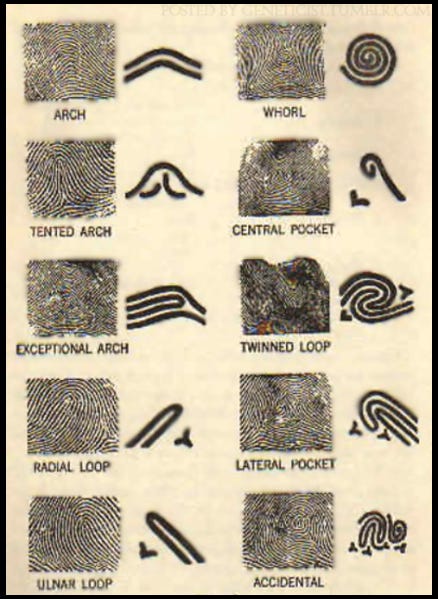

Is this faith in forensic sciences justified? Take fingerprinting. It’s been a respected technique in criminal investigations for over a century. Now the FBI has added AI to expand its Integrated Automated Fingerprint Identification System into Next Generation Identification, the world’s largest electronic repository of biometric and criminal history information.

The FBI’s Biometric Technology Center, a 360,000-square-foot facility in West Virginia, houses 189 million master prints at last count. The prints come not only from criminal investigations but also from civil records, such as those submitted for employment, licensing or adoptions. Sounds like a foolproof system for identifying perpetrators, right?

But after the algorithm sorts through millions of prints, a match still has to be confirmed by human examiners, and studies have found that they make mistakes. An examiner compares ridge endings, pores and other features within the whorls, loops and arches that are supposedly unique to every individual, yet there is no standard for how many matching points must be found before declaring a match. It could be 3 or it could be 16.

Then there’s the problem of comparing partial prints from crime scenes with full prints in the database. Add the issues around latent prints, the invisible impressions left by moisture or oils, which can be damaged during collection or degraded by weather and time. Fingerprints also can’t be dated, so a match does not prove when the suspect was present at the scene. And that’s before considering the methods criminals use to alter their fingerprints.

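A 2011 FBI study gave examiners a set of prints to analyze. Eighty-five percent of them made at least one error, most often by failing to recognize prints that actually matched. When given a chance to reconsider their work, only 10% changed their conclusions. The process churns out a fair number of mistakes, and when the mistake is a ‘false positive,’ an innocent person is linked to a crime.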

A famous case followed the 2004 Madrid train bombings. Spanish police sent prints to the FBI to compare against its database. Four experts confirmed a match between the prints and an Oregon lawyer named Brandon Mayfield. After Mayfield spent about two weeks in jail, Spanish police identified an Algerian suspect. It turned out Mayfield didn’t even have a valid passport.

A Georgia man, Dwight Gomas, spent 17 months on Rikers Island, charged with a robbery after his prints were matched to crime scene evidence. When the error was finally discovered, investigators realized Gomas had been in Atlanta when the crime occurred. New York City paid him $145,000 in damages.

Fingerprint evidence has been used in American criminal courts since the 1910 murder of Clarence Hiller, where police found a set of prints in wet paint on the back porch. Four experts linked Thomas Jennings to the prints, and he was convicted. On appeal he argued that the prints had been improperly admitted, but the Illinois Supreme Court cited the Encyclopaedia Britannica to conclude that fingerprinting was endorsed by ‘scientific authorities.’ That shoehorned the practice into the courts, and once it was in, the system of judicial precedent perpetuated it.

Fingerprinting is imperfect, but many other forensic sciences initially accepted as reliable were later discredited. Take hair microscopy. From the 1950s on, hairs found at crime scenes were compared with suspects’ hair under microscopes, and the FBI trained local investigators in the technique. A 2015 review found that in over half of the cases where such evidence had helped produce a conviction, testifying experts made erroneous statements. By 2016, FBI Director James Comey had sent letters to governors urging them to review all cases in which the technique had been used. Only 17 did so.

The same cycle of initial acceptance, decades of use and, finally, revelations of unreliability appears with bite-mark analysis, handwriting analysis, footprint and tire-mark analysis, blood spatter analysis, lie detectors and truth serum. Experts claimed near-100% reliability for lie detectors, for example, until studies showed their accuracy was only slightly better than chance.

Arturo Casadevall, MD, of the Johns Hopkins Bloomberg School of Public Health says, “Many of the forensic techniques used today to put people in jail have no scientific backing.” Yet convictions based on junk science are hard to reverse, in part because authorities fear setting a precedent that might force them to throw out huge numbers of other convictions based on the same ‘science.’

Despite this abysmal history, we still rally around the ‘scientific’ techniques of crime scene investigation. DNA analysis obviously changed the game, but it has problems too, because nothing humans do is 100% reliable. Forensic sciences were supposed to make Holmes-style interpretation of signs obsolete, yet amid the current crisis the emerging field of forensic semiotics is attracting interest. The FBI’s Next Generation Identification seduces with the fantasy that machine-managed data reservoirs will solve crimes perfectly and keep us all safe forever. But given what’s happened with so many forensic sciences, shouldn’t we at least interrogate that idea? What do you think?

*Never* trust anything generated by "AI". There are some limited circumstances where automated pattern recognition is very helpful as an indicator for humans, but beyond that, no.

Too many folks believe what they see in movies and television shows. I cringe at the instant results and the complete faith in forensics, and sometimes I yell at my screen: It doesn't work like that! Many of these tools may be useful guideposts for narrowing the field of suspects. Maybe.